“Serious Game” and XR Training Simulation Prototype

Traditional training for cabin crew members (TCP, from the Spanish Tripulantes de Cabina de Pasajeros) relies heavily on theoretical manuals and static classroom exercises, which fail to convey the spatial reality and intense stress of an actual emergency. Physical aircraft simulators exist, but they are prohibitively expensive and logistically complex. When a real emergency strikes, crew members often face cognitive overload from visual chaos, blaring alarms, and passenger panic, which delays decision-making.

A “Proof of Concept” for Experiential Learning

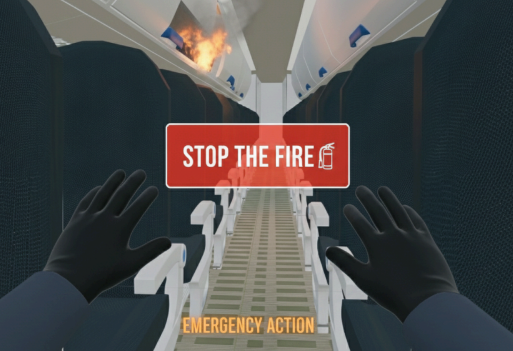

SafeFlight VR was developed as a high-fidelity prototype to test the viability of using standalone VR for experiential learning (“learning by doing”). By placing trainees in a 1:1-scale replica of a Boeing 737 cabin, this proof-of-concept provides a psychologically safe space in which errors become lessons rather than real-world dangers. The core objective of this initial build was to design a system that reduces reaction times and transforms theoretical protocols into instinctive actions.

Key UX & Technical Innovations Tested in the Prototype:

Cognitive Load Management: The prototype uses a progressive difficulty system that starts with standard procedures and gradually introduces stressors such as auditory alarms and smoke, allowing users to build stress resilience step by step.

Diegetic Interfaces for Deep Immersion: To avoid breaking the user's sense of presence, SafeFlight VR avoids traditional floating menus. Instead, information is integrated directly into the virtual environment through diegetic UI elements, such as instructions appearing on a realistic crew tablet.

Embodied & Accessible Interaction: The experience relies on natural hand tracking (grabbing oxygen masks, pulling emergency levers) rather than complex button inputs. To keep the experience accessible and reduce cybersickness among first-time VR users, movement is handled through safe teleportation mechanics and restricted physical play spaces.
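The progressive difficulty system described above can be pictured as an ordered list of levels, each adding one stressor to the previous one. The following is a minimal Python sketch, not the prototype's actual implementation. The names `DifficultyLevel`, `build_levels`, and `next_level`, the order of the stressors, and the rule that a trainee advances only after a successful run are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical stressor order; the text names only alarms and smoke as examples.
STRESSOR_ORDER: Tuple[str, ...] = ("auditory_alarm", "smoke")


@dataclass(frozen=True)
class DifficultyLevel:
    level: int
    stressors: Tuple[str, ...]  # stressors active at this level


def build_levels(stressor_order: Tuple[str, ...] = STRESSOR_ORDER) -> List[DifficultyLevel]:
    """Level 0 is the standard procedure; each later level adds one stressor."""
    return [
        DifficultyLevel(level=i, stressors=tuple(stressor_order[:i]))
        for i in range(len(stressor_order) + 1)
    ]


def next_level(current: int, passed: bool, max_level: int) -> int:
    """Assumed rule: advance only after a successful run, otherwise repeat the level."""
    if not passed:
        return current
    return min(current + 1, max_level)


if __name__ == "__main__":
    levels = build_levels()
    for lvl in levels:
        print(lvl.level, lvl.stressors)
```

Keeping the stressors in a single ordered tuple means a new variable (for example, passenger panic) could be introduced by appending one entry, without touching the progression logic.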

Version 1.0 Scope & Future Roadmap

As an initial prototype, the current build focuses on a single main scenario designed to test the core gameplay loop: Perception -> Decision -> Action -> Feedback. The interactions are kept deliberately simple to focus on cognitive training rather than complex mechanics.
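The four-step loop can be modeled as a small cyclic state machine. The sketch below is a hedged illustration in Python rather than the prototype's code. The `Phase` enum, the `advance` and `run_cycle` helpers, and the assumption that Feedback wraps back to Perception for the next event are all hypothetical.

```python
from enum import Enum
from typing import List


class Phase(Enum):
    PERCEPTION = "perception"  # trainee notices a cue (e.g. an alarm)
    DECISION = "decision"      # trainee chooses a response
    ACTION = "action"          # trainee performs it
    FEEDBACK = "feedback"      # system reports the outcome


# Enum members iterate in definition order, which matches the loop order.
_ORDER = list(Phase)


def advance(phase: Phase) -> Phase:
    """Return the next phase; FEEDBACK wraps to PERCEPTION (assumed)."""
    return _ORDER[(_ORDER.index(phase) + 1) % len(_ORDER)]


def run_cycle(start: Phase = Phase.PERCEPTION) -> List[str]:
    """Walk one full cycle from `start` and return the phase names visited."""
    phases = [start]
    while len(phases) < len(_ORDER):
        phases.append(advance(phases[-1]))
    return [p.name for p in phases]


if __name__ == "__main__":
    print(" -> ".join(run_cycle()))
```

Making the phases explicit gives a natural place to log timestamps per phase, which is one way reaction time could be measured in later builds.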